The FY25 AI inventory from the U.S. Department of Homeland Security shows no shortage of activity. What it reveals is a department that is experimenting broadly with artificial intelligence while still working to bring those efforts together into a coherent enterprise approach.

AI now appears across DHS components, mission areas, and functional domains. From operational support and intelligence analysis to administrative functions and research, the inventory reflects a department where AI experimentation has become common. Just as importantly, the FY25 inventory shows progress in how DHS documents and evaluates these efforts. Compared to earlier inventories, the department is doing a better job distinguishing between exploratory pilots and operational deployments, and it more consistently acknowledges risk considerations, oversight mechanisms, and intended use cases.

That increased transparency is a meaningful step forward. Large federal departments often struggle to maintain visibility into emerging technologies that develop independently across components. The DHS inventory suggests that leaders are trying to build a clearer picture of where AI is being used and how it is evolving across the enterprise.

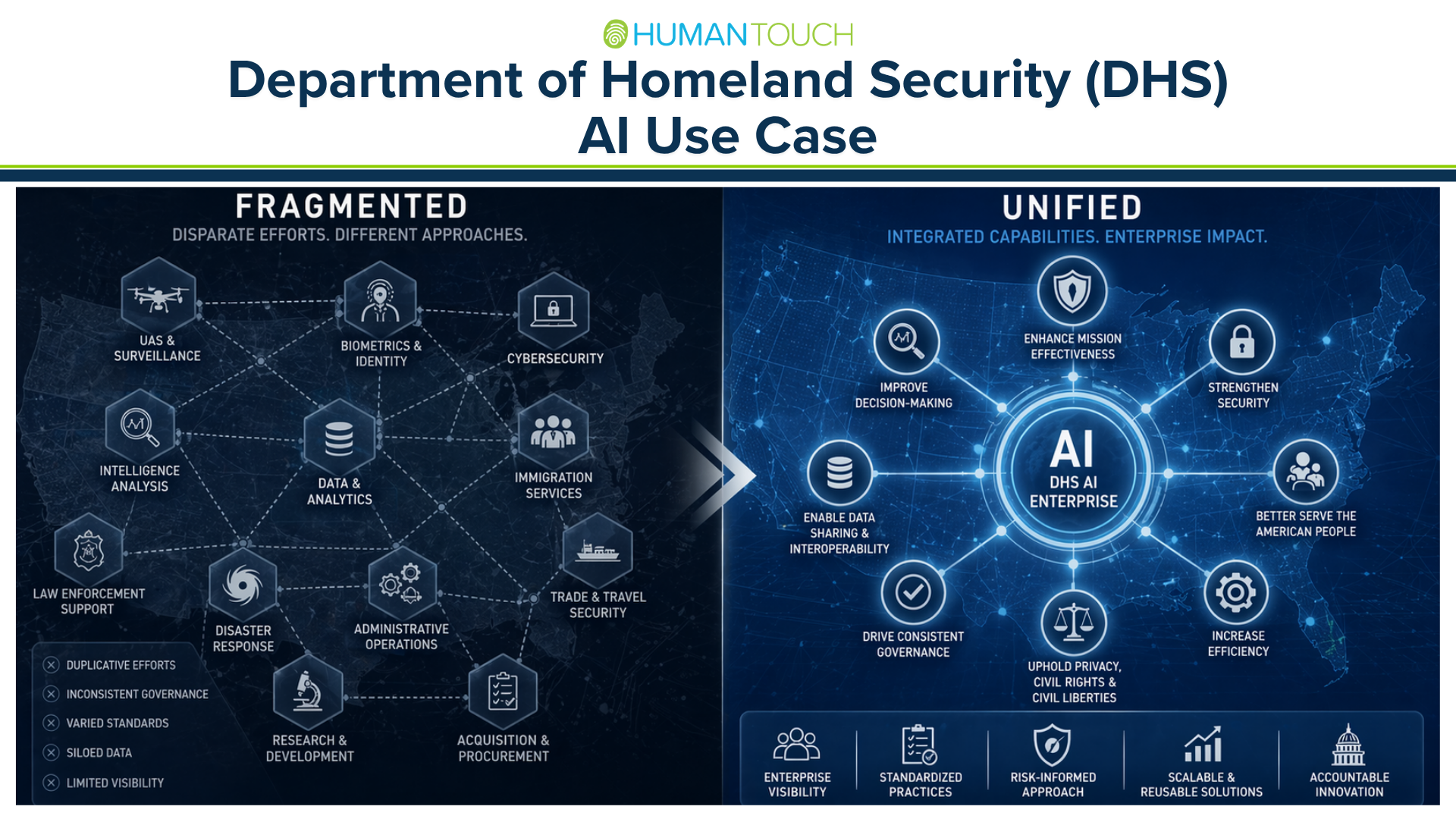

At the same time, the document highlights a structural challenge that is common in large, mission-driven organizations. Read end to end, the inventory feels less like a single coordinated portfolio and more like a collection of parallel initiatives. Similar capabilities appear across multiple components, often developed independently and under different governance structures.

In some areas, components appear to be adopting commercial AI tools and analytics platforms with relatively mature oversight frameworks. In others, the same types of capabilities are emerging through internal development or smaller pilots with more informal governance. Procurement approaches vary. Risk assessments are handled differently. Practices that appear well established in one part of the department look more ad hoc in another.

This kind of fragmentation is not unusual during early technology adoption cycles. Innovation often happens at the edges of large organizations before it becomes standardized. But for AI in particular, fragmentation can create real operational and governance challenges. When systems are built independently, opportunities for reuse and shared learning are easily missed. Oversight practices become inconsistent. And the department risks duplicating investments in tools that could otherwise serve multiple components.

The stakes are especially high at DHS because many AI applications operate in mission-critical environments and in areas that directly affect the public. Systems that support border operations, cybersecurity, immigration services, disaster response, and law enforcement carry significant operational and civil liberties implications. Ensuring that these tools are governed consistently and integrated thoughtfully is essential.

The FY25 inventory ultimately makes clear that DHS’s next challenge is not identifying new AI use cases. The department already has many of them. The more important task now is determining which capabilities should scale, how they connect across components, and who is responsible for managing them as part of a coordinated enterprise system.

If DHS can move from experimentation to integration, the department will be far better positioned to harness AI’s benefits while maintaining the oversight, accountability, and operational discipline that its mission requires.

DHS AI Use Case Inventory:

https://www.dhs.gov/publication/ai-use-case-inventory-library